Escape the Cloud Tax – Post 5: “Serve Faster. Spend Smarter. Scale Better.”

As GenAI applications move from prototype to production, inference demands are exploding — across models, hardware, and environments. But most runtime

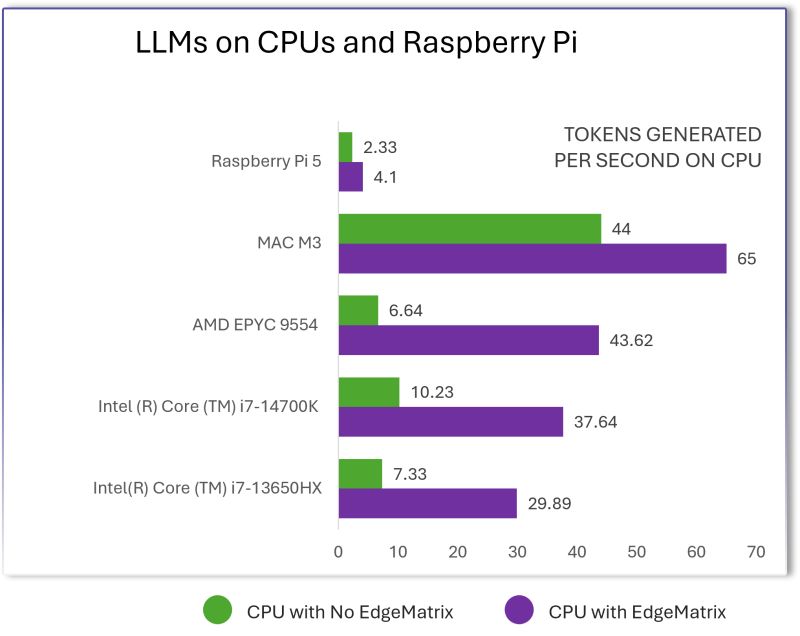

Escape the Cloud Tax – Post 4: “LLMs on CPUs, Raspberry Pi & Beyond – EdgeMatrix at the Edge”

Most people assume that to run LLMs well, you need an H100 or expensive cloud GPUs. We decided to challenge that

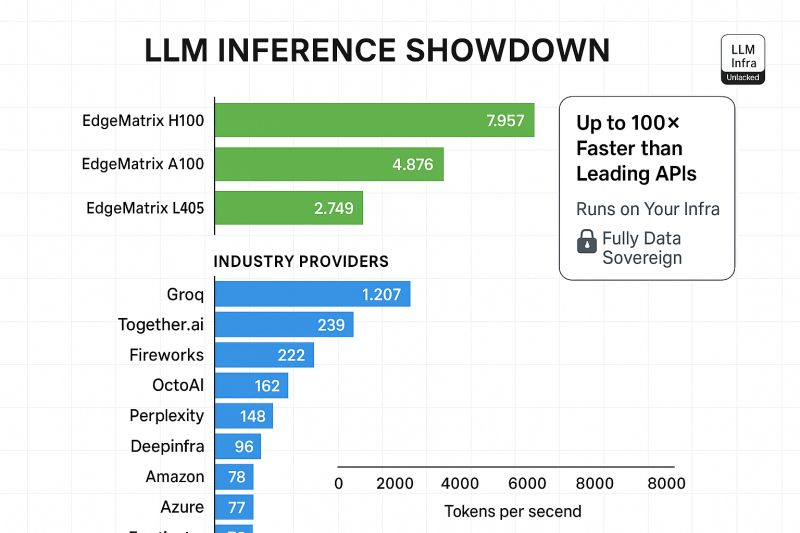

Escape the Cloud Tax – Post 3: “LLM Inference Showdown: EdgeMatrix vs the Rest”

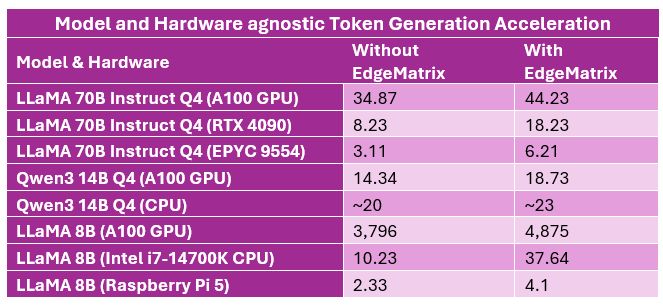

LLMs aren’t just about parameter counts anymore. When deploying LLMs at scale, performance isn’t theoretical - it’s measured in tokens per

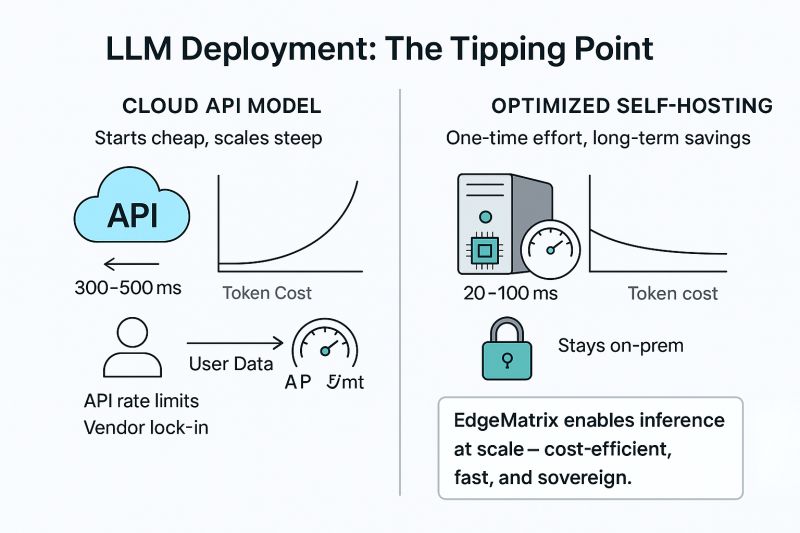

Escape the Cloud Tax – Post 2: “Cloud APIs vs Self-Hosting – Rethinking LLM Deployment at Scale”

Most teams begin their GenAI journey using cloud-based LLM APIs - and for good reason.
- They're convenient
- Pay-as-you-go feels cost-efficient
-

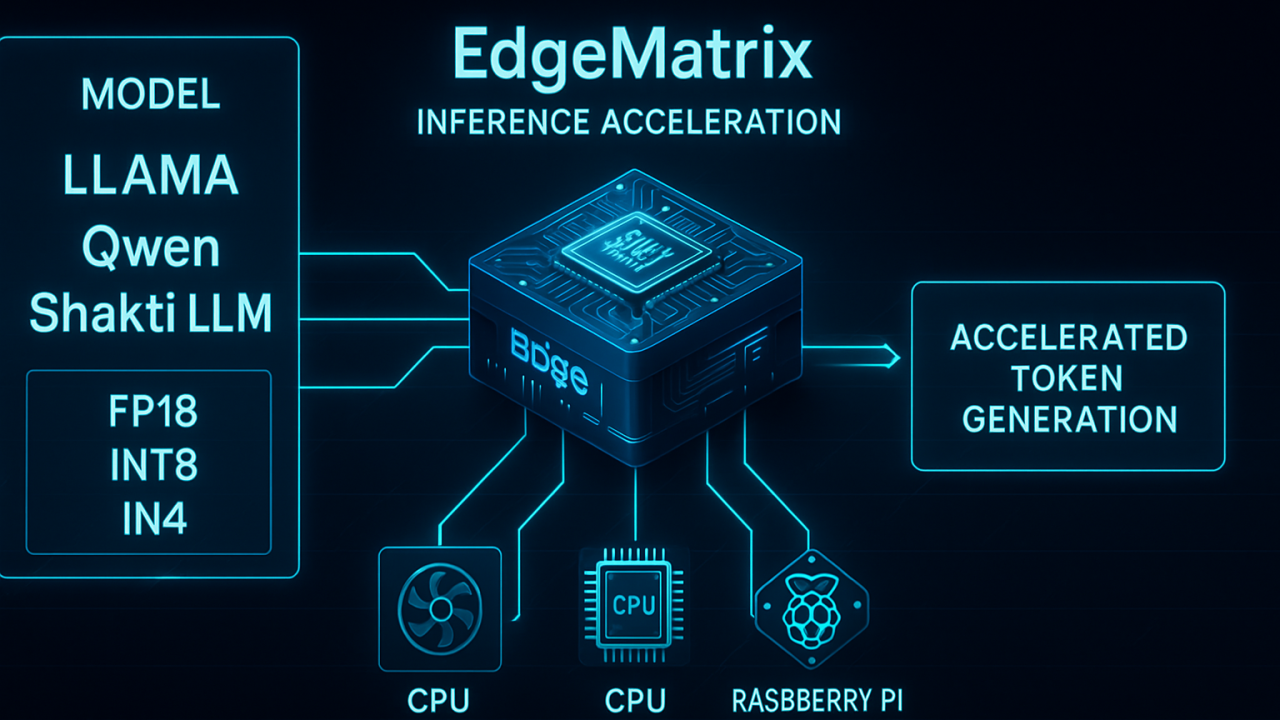

Escape the Cloud Tax – Post 1: “Why We Built EdgeMatrix: One Runtime to Rule Inference”

The cloud made AI accessible. But it also made it expensive. And slow. And

EdgeMatrix: Scaling 70B Parameter Models for Enterprise AI

Scaling to 70B Models on Mid-Range Enterprise Hardware. Using our EdgeMatrix framework, we recently completed benchmarking for the meta-llama/Llama-3.3-70B-Instruct model. The

Shakti 4B’s OCR Capabilities: A Comprehensive Evaluation

In the dynamic field of Optical Character Recognition (OCR), Mistral AI's recent introduction of Mistral OCR (Mistral OCR 2503) has

How EdgeMatrix is Redefining Enterprise AI: More Performance, Less Cost

As enterprises scale their AI adoption, they face a dual challenge—delivering real-time AI performance while optimizing infrastructure costs. High-end GPUs

Shakti-4B: The Multi-Modal AI Model Powering Vision-Language Intelligence

At SandLogic, we've consistently pushed the boundaries of AI innovation across diverse modalities. With the successful launch of Shakti-1B and

Shakti-1B: A Vision-Language Model Built for Enterprise Excellence

At SandLogic, our mission is to push the boundaries of artificial intelligence by developing highly optimized language models that cater